We are living in a time where superintelligence is no longer science fiction, and Elon Musk is projecting that robots will be cleaning dishes in our homes. Behind all of this (the chatbots, the search engines, the content generators, the autonomous systems) are large language models. And what fuels those models? High-quality datasets specifically designed for language understanding and generation.

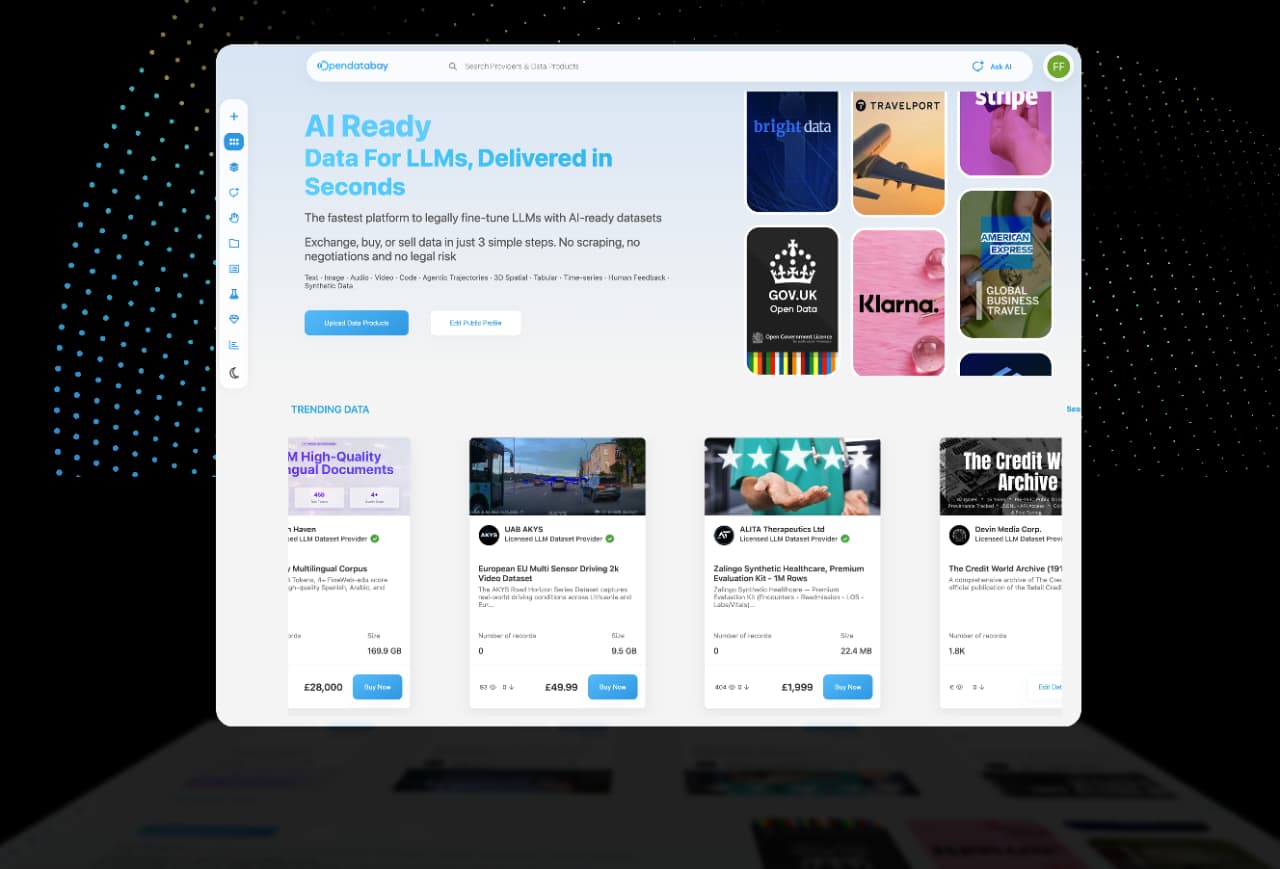

These specialised datasets (known as LLM datasets) play a critical role in training and fine-tuning AI models so they can perform accurately in real-world scenarios. Platforms like Opendatabay give developers and organisations access to curated datasets they can use to enhance their AI models.

This article covers what LLM datasets actually are, where to find them on Opendatabay, and how to use them effectively to support AI development.

What Are LLM Datasets?

LLM datasets are collections of textual data used to train and fine-tune large language models. These datasets can include question-and-answer pairs, conversation transcripts, instruction-based prompts, domain-specific documents, and structured knowledge in text form.

Unlike general text data, LLM datasets are typically structured and optimised for machine learning workflows. They help models learn how to interpret context, generate responses, extract information, and reason through complex tasks.
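As a rough illustration of what "structured" can mean here, many instruction-style datasets are distributed as JSON Lines, with one record per line. The sketch below assumes that format and uses hypothetical `prompt` and `response` field names. Real datasets vary, so check each dataset’s documentation for its actual schema.

```python
import json

# Two hypothetical instruction-tuning records in JSON Lines format (one JSON object per line).
# The field names "prompt" and "response" are illustrative assumptions, not a fixed standard.
RAW_JSONL = """\
{"prompt": "What does RAG stand for?", "response": "Retrieval-Augmented Generation."}
{"prompt": "Name one use of an LLM dataset.", "response": "Fine-tuning a model for a niche domain."}
"""

def load_jsonl(text):
    """Parse JSON Lines text into a list of dicts, skipping blank lines."""
    return [json.loads(line) for line in text.splitlines() if line.strip()]

records = load_jsonl(RAW_JSONL)
for record in records:
    print(record["prompt"], "->", record["response"])
```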

Common applications for LLM datasets include fine-tuning AI models for niche industries (like legal, finance, or healthcare), building Retrieval-Augmented Generation (RAG) systems that combine search with language models, powering conversational AI like chatbots and virtual assistants, and developing generative content creation tools.

Where to Find LLM Datasets

Finding the right dataset is one of the most important steps in AI development. Opendatabay (https://www.opendatabay.com/data/premium) provides a structured marketplace where developers and organisations can discover datasets built specifically for AI and machine learning use cases.

These datasets may include:

- Instruction-tuning datasets for conversational models.

- Domain-specific knowledge datasets.

- Annotated text collections for NLP.

- Question-answer datasets for knowledge-based AI systems.

One of the main benefits of a structured marketplace is the ability to sort datasets by category, use case, or data format, which makes it much easier for developers to find a dataset that actually fits their needs.

Tips for Using LLM Datasets Effectively

Getting access to a dataset is just the first step. To actually get the most out of LLM datasets in AI workflows, developers should follow a few best practices.

- Preprocess the Data

Datasets should always be preprocessed before being used for training or fine-tuning. This can include removing duplicates or redundant entries, cleaning up formatting inconsistencies, standardising text structures, and filtering out low-quality or irrelevant content.

Proper preprocessing ensures the dataset actually adds value to model performance rather than introducing noise.
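The preprocessing steps above can be sketched in a few lines of Python. This is a minimal example, not a production pipeline. It assumes records are dicts with hypothetical `prompt` and `response` fields. It normalises whitespace, filters out empty prompts and very short responses (the `min_response_chars` threshold is an arbitrary assumption), and drops exact duplicates after case-insensitive normalisation.

```python
import re

def normalise(text):
    """Collapse runs of whitespace into single spaces and strip the ends."""
    return re.sub(r"\s+", " ", text).strip()

def preprocess(records, min_response_chars=10):
    """Normalise, filter, and deduplicate prompt/response records.

    min_response_chars is a hypothetical quality threshold; tune it per dataset.
    """
    seen = set()
    cleaned = []
    for rec in records:
        prompt = normalise(rec.get("prompt", ""))
        response = normalise(rec.get("response", ""))
        # Filter out empty prompts and very short (likely low-quality) responses.
        if not prompt or len(response) < min_response_chars:
            continue
        # Deduplicate on the case-insensitive, whitespace-normalised pair.
        key = (prompt.lower(), response.lower())
        if key in seen:
            continue
        seen.add(key)
        cleaned.append({"prompt": prompt, "response": response})
    return cleaned

raw = [
    {"prompt": "What is  an LLM?", "response": "A large language model trained on text."},
    {"prompt": "what is an LLM? ", "response": "A large language model  trained on text."},
    {"prompt": "Define RAG.", "response": "N/A"},
    {"prompt": "", "response": "Orphan response with no prompt."},
]
print(preprocess(raw))
```

Only the first record survives here. The second is a near-duplicate once whitespace and case are normalised, the third has a response below the length threshold, and the fourth has no prompt. Real pipelines often add fuzzy deduplication and language or toxicity filters on top of checks like these.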

- Understand Licensing Terms

Dataset licensing determines exactly how the data can be used (whether that’s for research, internal development, or commercial AI applications). Developers need to be clear on the licensing conditions before putting any dataset to work, otherwise it can lead to legal trouble down the line.

Opendatabay provides clear documentation on licensing structures for AI training and fine-tuning datasets:

https://docs.opendatabay.com/ai-training-licenses/general-ai-training-and-fine-tuning-data-license

Clear licensing lets developers incorporate datasets into their projects with confidence.


- Integrate Datasets with Your Model Pipeline

Once you’ve selected and licensed a dataset, the next step is getting it into your training pipeline. This usually means converting the data into a format your framework of choice (PyTorch, TensorFlow, or another ML library) can consume, or, for RAG systems, into vector embeddings.

Common tasks at this stage include organising prompts and responses for instruction tuning, splitting datasets into training and validation sets, and running evaluations to test how the fine-tuned model performs against your benchmarks.
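Two of these tasks, organising prompts and responses for instruction tuning and splitting the data into training and validation sets, are sketched below. The `### Instruction:` / `### Response:` template is one common convention and is used here only as an assumption, so match whatever format your base model expects. The `val_fraction` and `seed` values are likewise illustrative.

```python
import random

# Hypothetical prompt template; adapt it to the format your base model was trained on.
TEMPLATE = "### Instruction:\n{prompt}\n\n### Response:\n{response}"

def to_training_text(record):
    """Render one prompt/response record into a single training string."""
    return TEMPLATE.format(prompt=record["prompt"], response=record["response"])

def train_val_split(items, val_fraction=0.1, seed=42):
    """Shuffle a copy with a fixed seed and hold out val_fraction for validation."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    # Keep at least one validation example whenever there is any data at all.
    n_val = max(1, int(len(shuffled) * val_fraction)) if shuffled else 0
    return shuffled[n_val:], shuffled[:n_val]

records = [{"prompt": f"Question {i}?", "response": f"Answer {i}."} for i in range(20)]
texts = [to_training_text(r) for r in records]
train, val = train_val_split(texts, val_fraction=0.2)
print(len(train), len(val))  # 16 4
```

Fixing the shuffle seed keeps the split reproducible across runs, so evaluation results against your benchmarks stay comparable as you iterate on the model.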

Why Use Opendatabay for LLM Data

Opendatabay is built to remove the friction that slows down AI teams when sourcing training data.

Advanced Filtering. Search and filter datasets by industry, data type, language, or AI application so you find what’s relevant without digging through irrelevant listings.

Clear Licensing. Every dataset comes with explicit licensing terms, so your team knows exactly how the data can be used for model training, fine-tuning, and deployment.

Curated Quality. Datasets are organised, documented, and verified before listing, which means less time cleaning raw data and more time building.

Specialised Data. From domain-specific corpora to instruction-tuning pairs, the marketplace surfaces data that’s structured for how modern AI teams actually work.

Whether you’re training a chatbot, building a domain-specific language model, or developing a RAG-based search assistant, the quality of your dataset directly shapes the performance of your model. Opendatabay streamlines the discovery, evaluation, and licensing of that data so developers can focus on what actually matters: building better models.